Approve AI in Production

Know what your AI agents can do before you approve them in production.

AI agents are already reaching production systems and external services. The question is whether you have evidence of which identities they run under, what they can reach, where their permissions have drifted, and what to remediate before broader rollout.

Start 30-Day Evaluation

Standard identity governance wasn't built for this.

No human principal. No traditional access request. No lifecycle tied to an employee.

External reach (to LLM endpoints, AI services, and third-party APIs) was often never part of the original security review.

What the platform surfaces

Already applies to what you're running today.

- Existing AI agents or workflows now reaching AI endpoints

- Service accounts whose scope has grown over time

- AI agents or workflows whose owners have departed

Approve AI in production with evidence-based security review.

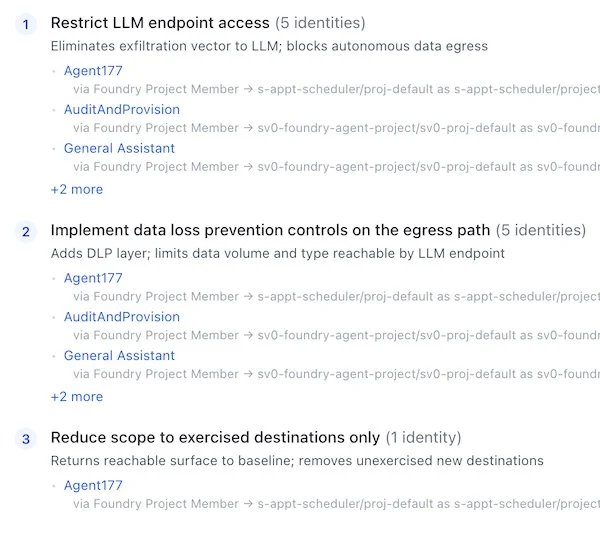

Findings your team can act on immediately.

Prioritized by real impact

Every finding is tied to a specific AI agent, identity, and execution path, with the evidence to prove it.

Remediation guidance included

Structural actions that reduce exposure without disrupting running processes.

Workflow-ready format

Structured for direct handoff into ServiceNow, Jira, or your existing remediation process.

Executive-ready evidence

One-line business risk statements and audit-grade documentation for leadership and compliance.

This is relevant if…

- You are deploying AI agents into production and need to validate access scope before approval

- Existing agents or workflows now reach LLM or AI endpoints that were not part of their original design

- You need evidence of which agent paths have external egress before an audit or review

Approve AI in production with evidence.